Randomness and structure in neural representations for learning

Ashok Litwin-Kumar

Postdoc, Center for Theoretical Neuroscience, Columbia University

Stanford Neurosciences Institute Statistical and Computational Neuroscience Faculty Candidate

Abstract:

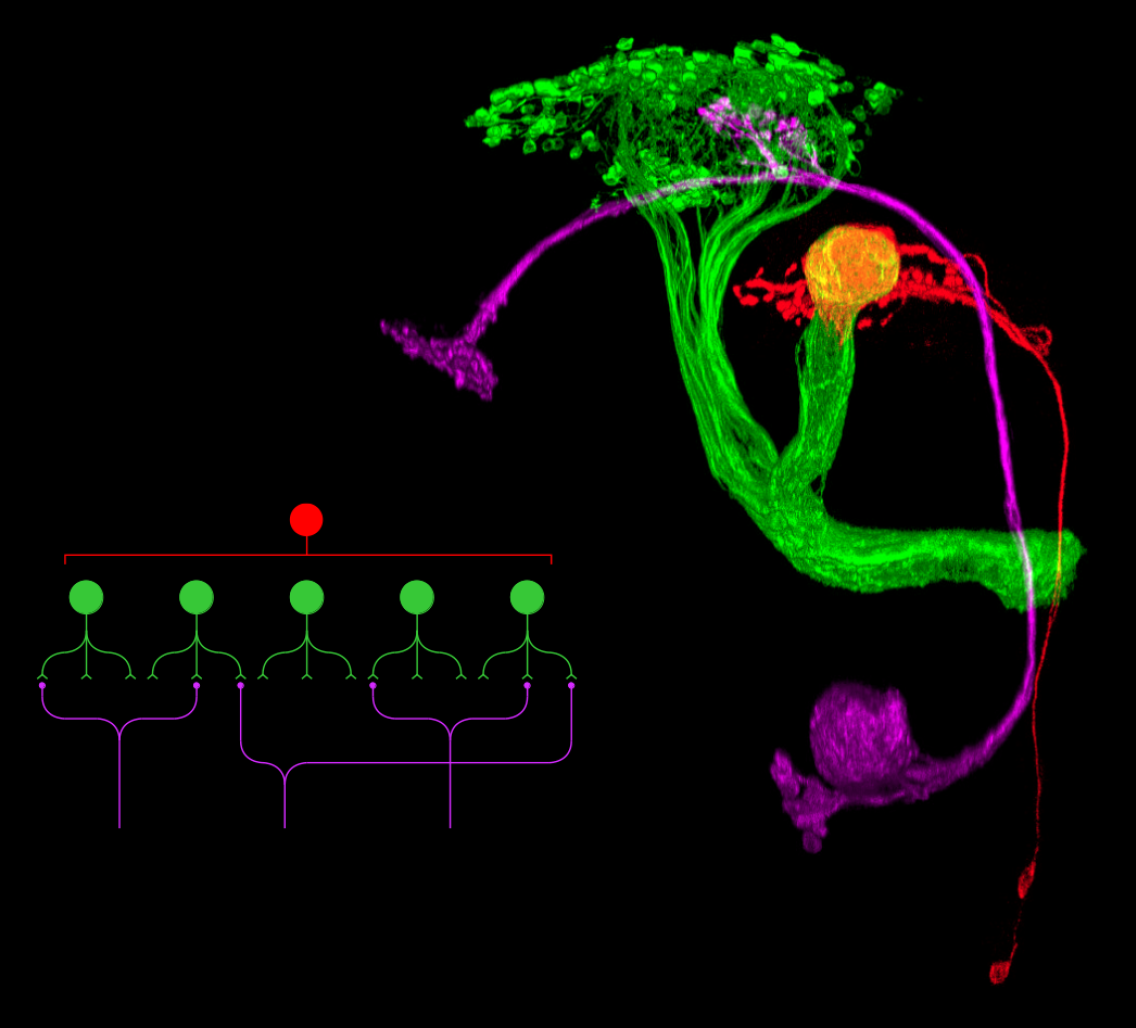

Synaptic connectivity varies widely across neuronal types, from the hundreds of thousands of connections received by cerebellar Purkinje cells to the handful received by granule cells. In this talk, I will discuss recent work that addresses what determines the optimal number of connections for a given neuronal type and what this means for neural computation. The theory I will describe predicts optimal values for the number of inputs to cerebellar granule cells and Kenyon cells of the Drosophila mushroom body, shows that random wiring can be optimal for certain computations, and also provides a functional explanation for why the degrees of connectivity in cerebellum-like and cerebrocortical systems are so different. I will also discuss applications of the theory to an analysis of a complete electron-microscopy reconstruction of the larval Drosophila mushroom body and to recordings of cortical activity in mice performing a decision-making task. These analyses point toward new ways of analyzing neural data and provide a framework for understanding the neural representations that support learned behaviors.

Bio

Ashok Litwin-Kumar received a BS in physics from Caltech and a PhD in computational neuroscience from Carnegie Mellon University, where he was advised by Brent Doiron. He is currently a postdoctoral fellow at the Center for Theoretical Neuroscience at Columbia University, supervised by Larry Abbott and Richard Axel. His research there has focused on the neural representations that support learned behavior.